Debugging Vapi Calls: The Complete Guide to Finding Root Causes Fast

A step-by-step guide to diagnosing failed or poor-quality Vapi calls — from reading Vapi logs, to identifying handoff failures, to correlating against your downstream systems.

TL;DR — The short answer

1. Vapi failures fall into 4 categories: connection failures, LLM/TTS handoff failures, function call execution failures, and transfer/escalation failures. Each has a distinct signature in the Vapi call object.

2. The Vapi call object is the single best starting point for any debugging session. It contains status, endedReason, a per-stage latency breakdown, tool call results, and the full conversation in the messages array.

3. Most 'silence' issues in Vapi are slow LLM responses after endpointing has fired, not audio failures. The llmLatency field in the call object confirms this within seconds.

4. Cross-provider correlation (Vapi + Twilio + your backend) is almost always required for root cause analysis on complex failures, because no single provider's log tells the complete story.

The Vapi call lifecycle: what happens at each stage and what can fail

Reading the Vapi call object — every field that matters
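The fields described in this section can all be pulled from a single API request. The sketch below fetches a call object and reduces it to the fields this guide reads first. It assumes the base URL https://api.vapi.ai with a bearer-token Authorization header (the article itself only gives the path GET /call/{id}). It also takes the article's field names literally: a latency object and messages whose type is "toolCallResult". Real payloads may use different keys, so check one of your own call objects before relying on it.

```python
import json
import urllib.request
from typing import Any

# Assumption: Vapi's REST base URL; the guide only gives the path GET /call/{id}.
VAPI_BASE_URL = "https://api.vapi.ai"


def fetch_call(call_id: str, api_key: str) -> dict[str, Any]:
    """Fetch a call object with GET /call/{id} using a bearer token (assumed auth scheme)."""
    req = urllib.request.Request(
        f"{VAPI_BASE_URL}/call/{call_id}",
        headers={"Authorization": f"Bearer {api_key}"},
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        return json.load(resp)


def summarize_call(call: dict[str, Any]) -> dict[str, Any]:
    """Keep only the fields this guide reads first; missing fields come back as None."""
    messages = call.get("messages") or []
    # Assumption: tool results are tagged with type == "toolCallResult", as the guide names them.
    tool_results = [m for m in messages if m.get("type") == "toolCallResult"]
    return {
        "status": call.get("status"),
        "endedReason": call.get("endedReason"),
        "latency": call.get("latency"),
        "toolCallResults": [m.get("result") for m in tool_results],
        "cost": call.get("cost"),
        "costBreakdown": call.get("costBreakdown"),
    }
```

Usage would look like `summarize_call(fetch_call("<call-id>", "<private-api-key>"))`, where both arguments are placeholders. Keeping the fetch and the summary separate means the summary can also be run on an end-of-call-report webhook payload you have already stored.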

The call object is returned by the Vapi API (GET /call/{id}) and is also delivered in the end-of-call-report webhook payload. It contains everything you need for initial diagnosis.

The status field tells you the final state: ended is normal termination, while failed indicates a Vapi-level error before the call could complete.

The endedReason field is the most important field for debugging. Key values to know:

- customer-ended-call: the caller hung up normally.
- assistant-ended-call: your agent terminated the call intentionally (check your assistant's endCallPhrases or endCallFunctionEnabled).
- voicemail: the call reached a voicemail recording.
- silence-timed-out: no caller audio was detected within the configured silenceTimeoutSeconds.
- max-duration-exceeded: the call hit your maxDurationSeconds limit.
- pipeline-error-*: a provider-level error in the Vapi processing pipeline.

The latency object breaks down response time per stage. Look for the llm, tts, and vapi fields: if llm is the outlier (above 1,500ms on streaming responses), the silence issue is LLM latency, not TTS.

The messages array contains the full conversation transcript, including all tool call requests and their results. For function call debugging, look for toolCallResult message types: the result field contains exactly what your function endpoint returned.

The cost and costBreakdown fields show per-stage cost attribution, which is useful for spotting unexpectedly expensive calls caused by runaway LLM response length or model selection.

The most common Vapi failure types and their diagnostic signatures
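Most of the signatures described in this section can be checked mechanically against the call object. The sketch below maps a call object onto those signatures in a fixed order: transfer, function call, LLM latency, pipeline error. The 1,500 ms threshold comes from this guide. Treating any tool result that mentions "error" as a failure is a hypothetical heuristic, not a Vapi rule, and the field names (latency.llm, messages with type "toolCallResult") follow the article's naming rather than a verified schema. Premature endpointing is left out because the guide gives no call-object signature for it.

```python
from typing import Any

# Threshold from this guide: LLM latency above ~1,500 ms on streaming responses.
LLM_LATENCY_THRESHOLD_MS = 1500


def tool_call_failed(result: Any) -> bool:
    """Hypothetical heuristic: a dict with an 'error' key, or a string mentioning 'error'."""
    if isinstance(result, dict):
        return "error" in result
    return isinstance(result, str) and "error" in result.lower()


def classify_failure(call: dict[str, Any]) -> str:
    """Map a call object onto the failure signatures described in this section."""
    reason = call.get("endedReason") or ""
    llm_ms = (call.get("latency") or {}).get("llm") or 0
    messages = call.get("messages") or []

    if reason in ("transfer-failed", "dial-failed"):
        return "transfer-failure"
    if any(
        m.get("type") == "toolCallResult" and tool_call_failed(m.get("result"))
        for m in messages
    ):
        return "function-call-failure"
    if reason in ("silence-timed-out", "customer-ended-call") and llm_ms > LLM_LATENCY_THRESHOLD_MS:
        return "llm-latency-silence"
    if reason.startswith("pipeline-error-"):
        return "pipeline-error"
    return "unclassified"
```

The check order is a judgment call: a call can show several signatures at once, and putting the explicit failures (transfer, tool call) first keeps a slow LLM from masking them.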

LLM latency silence. Signature: endedReason is silence-timed-out or customer-ended-call, with a high llmLatency value in the latency breakdown. Fix: switch to a lower-latency model (gpt-4o-mini, claude-haiku-4-5) or shorten your system prompt to reduce first-token latency.

Agent cuts the caller off (premature endpointing). Fix: increase the endpointingConfig.onPunctuationSeconds and onNoPunctuationSeconds values in your assistant configuration to give the caller more silence before Vapi assumes they've finished speaking.

Function call failure. Signature: a toolCallResult message in the messages array with a non-success result, often followed by the agent producing a confused or generic response. Fix: check the result field, which contains the exact response your endpoint returned. The most common causes are 4xx/5xx responses from your endpoint, function timeout (default 20s), and malformed JSON responses.

Transfer failure. Signature: endedReason is transfer-failed or dial-failed. Fix: verify that the transfer destination number is correctly formatted (E.164 format required), that your Twilio number has permission to make outbound calls to that number, and that the transfer destination is not a voicemail-only line.

How to correlate Vapi calls with Twilio when running on Twilio infrastructure
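The correlation described in this section comes down to one extra HTTP request once you have the Vapi call object. The sketch below builds the Twilio Calls resource URL quoted in the text, fetches it with HTTP basic auth (Account SID and Auth Token), and lines up a few Twilio-side fields next to the Vapi endedReason. It only reads status, direction, and duration from the Twilio record. The helper names are hypothetical.

```python
import base64
import json
import urllib.request
from typing import Any

TWILIO_API = "https://api.twilio.com"


def twilio_call_url(account_sid: str, call_sid: str) -> str:
    """Build the Calls resource URL quoted in this section."""
    return f"{TWILIO_API}/2010-04-01/Accounts/{account_sid}/Calls/{call_sid}.json"


def fetch_twilio_call(account_sid: str, auth_token: str, call_sid: str) -> dict[str, Any]:
    """GET the Twilio call record using HTTP basic auth (Account SID + Auth Token)."""
    token = base64.b64encode(f"{account_sid}:{auth_token}".encode()).decode()
    req = urllib.request.Request(
        twilio_call_url(account_sid, call_sid),
        headers={"Authorization": f"Basic {token}"},
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        return json.load(resp)


def correlate(vapi_call: dict[str, Any], twilio_call: dict[str, Any]) -> dict[str, Any]:
    """Put the Vapi view of the call next to Twilio's independent record."""
    duration = twilio_call.get("duration")
    return {
        "callSid": vapi_call.get("phoneCallProviderId"),
        "vapiEndedReason": vapi_call.get("endedReason"),
        "twilioStatus": twilio_call.get("status"),
        "twilioDirection": twilio_call.get("direction"),
        # Twilio reports duration as a string of seconds; it may be missing or null.
        "twilioDurationSeconds": int(duration) if duration else None,
    }
```

A typical flow would be `correlate(call, fetch_twilio_call(account_sid, auth_token, call["phoneCallProviderId"]))`. A mismatch such as a Twilio status of completed alongside a Vapi pipeline error is exactly the kind of disagreement that only shows up when both records sit side by side.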

The Twilio Call SID is stored in the Vapi call object's phoneCallProviderId field (for phone calls routed through Twilio). Use this SID to pull the full Twilio call record with GET /2010-04-01/Accounts/{AccountSid}/Calls/{CallSid}.json. This gives you the Twilio-side status, duration, direction, and any error codes that Twilio logged independently.

Debugging checklist: 15-minute incident triage for Vapi calls
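The first three checks in this section (endedReason, latency breakdown, tool call results) can be scripted against the call object. The sketch below is a hypothetical triage helper, not a Vapi tool. It takes the call duration as a parameter because the guide does not name a duration field, and it treats the "outlier" as simply the slowest of the llm, tts, and vapi stages, which is a simplification. As in the rest of this guide, the latency and toolCallResult field names are taken from the article, not from a verified schema.

```python
from typing import Any, Optional

# Advice per slow stage, paraphrasing the latency check in this section.
OUTLIER_ADVICE = {
    "llm": "use a faster model or shorten the system prompt",
    "tts": "check the length of the text being spoken",
    "vapi": "check Vapi's status page for a platform-level issue",
}


def latency_outlier(latency: dict[str, Any]) -> Optional[str]:
    """Return the slowest known stage, or None if there is no usable breakdown."""
    stages = {k: v for k, v in latency.items() if k in OUTLIER_ADVICE and v is not None}
    if not stages:
        return None
    return max(stages, key=lambda k: stages[k])


def triage(call: dict[str, Any], duration_seconds: float) -> list[str]:
    """Run the first three checklist checks and return findings in order."""
    findings: list[str] = []
    reason = call.get("endedReason") or ""

    # Check 1: read endedReason first.
    if reason == "customer-ended-call":
        if duration_seconds < 10:
            findings.append("customer hang-up under 10 s: suspicious")
        elif duration_seconds > 30:
            findings.append("customer hang-up over 30 s: probably genuine")
    elif reason.startswith("pipeline-error-"):
        findings.append("pipeline error: problem is in Vapi's infrastructure")
    elif reason == "silence-timed-out":
        findings.append("silence timeout: check LLM latency")

    # Check 2: find the slowest stage in the latency breakdown.
    stage = latency_outlier(call.get("latency") or {})
    if stage is not None:
        findings.append(f"latency outlier is {stage}: {OUTLIER_ADVICE[stage]}")

    # Check 3: flag tool call results that look like errors (string-match heuristic).
    for message in call.get("messages") or []:
        result = message.get("result", "")
        if message.get("type") == "toolCallResult" and "error" in str(result).lower():
            findings.append(f"tool call failure: {result}")
    return findings
```

The fourth check, pulling the Twilio record, is left out here because it needs credentials and a network call; the findings list is meant to tell you whether that step is worth doing for a given incident.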

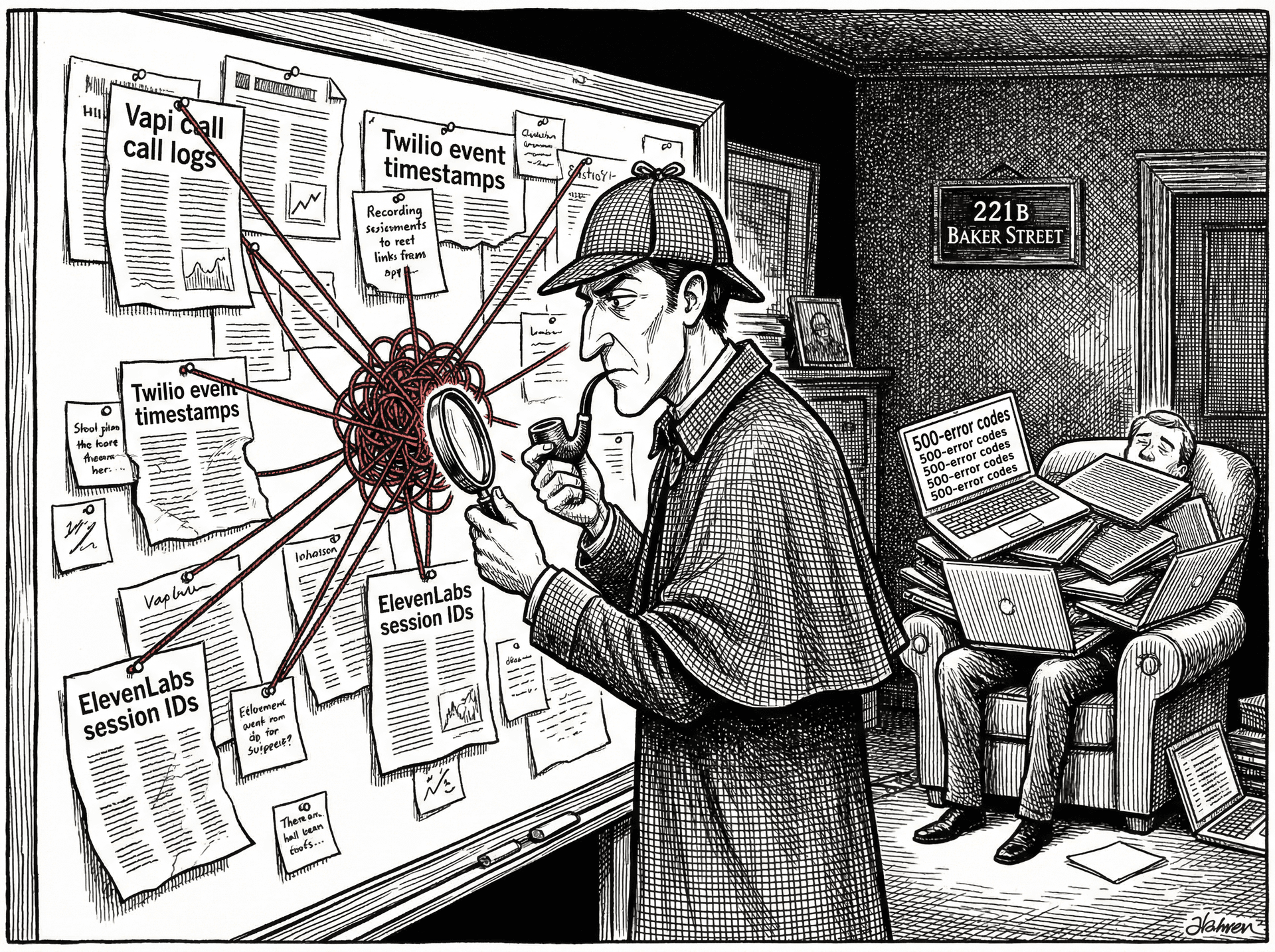

1. Pull the call object with GET /call/{id} from the Vapi API and read endedReason first. If it's customer-ended-call, check call duration: under 10 seconds is suspicious, while over 30 seconds with a normal conversation flow is probably a genuine hang-up. If it's pipeline-error-*, the problem is in Vapi's infrastructure. If it's silence-timed-out, check llmLatency.
2. Check the latency breakdown. If llmLatency is the outlier, the fix is model speed or prompt length. If ttsLatency is the outlier, check text length in the messages array. If vapi latency is the outlier, check Vapi's status page, since this indicates a platform-level issue.
3. Scan the messages array for toolCallResult entries. Any non-success result is a function call failure that contributed to the incident.
4. Pull the Twilio call record using the Call SID in phoneCallProviderId. Check Twilio call status, duration, and any error codes. For transfers, pull the outbound dial SID.

Automating Vapi call forensics with Sherlock

Sherlock automates this workflow. For a given call, it returns the endedReason classification, the latency breakdown by stage, the relevant messages from the conversation, and the cross-referenced Twilio call record: everything from the 15-minute checklist, in under 60 seconds.

Frequently asked questions

Why does Vapi show endedReason: customer-did-not-give-microphone-permission?

This endedReason fires when the browser (in web-based Vapi integrations) cannot access the microphone. It is almost always a browser permissions issue rather than a Vapi configuration problem. The caller either denied microphone access when prompted, or the site is served over HTTP instead of HTTPS (microphone access requires a secure context in all modern browsers). Check that your domain is served over HTTPS and that your integration correctly triggers the browser permission prompt before initiating the Vapi session.

How do I debug Vapi function calling failures?

Function call failures in Vapi appear in the call object's messages array as a toolCallResult message whose result field contains an error. The most common causes are: the function endpoint returns an error status code (4xx or 5xx); the function takes longer than Vapi's configured function timeout (default 20 seconds); or the function returns a malformed JSON response that Vapi cannot parse. Check the toolCallResult messages in the Vapi call object first: the result field will contain the exact response your endpoint returned, which is usually enough to identify the bug.
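The three causes above can be checked locally by replaying a request against your own endpoint. The sketch below assumes you have a saved request payload and your endpoint's URL (both hypothetical inputs; it does not reproduce Vapi's actual request format). It times the call and reports an error status, a response at or over the 20-second default timeout from this guide, and a body that does not parse as JSON.

```python
import json
import time
import urllib.error
import urllib.request
from typing import Any

# Default from this guide: the function timeout is 20 seconds unless configured otherwise.
DEFAULT_TIMEOUT_S = 20.0


def diagnose_tool_response(status: int, body: str, elapsed_s: float,
                           timeout_s: float = DEFAULT_TIMEOUT_S) -> list[str]:
    """Return the problems (if any) that match the three common causes."""
    problems: list[str] = []
    if status >= 400:  # 4xx/5xx, as described above
        problems.append(f"HTTP {status}: endpoint returned an error status")
    if elapsed_s >= timeout_s:
        problems.append(f"took {elapsed_s:.1f}s, at or over the {timeout_s:.0f}s function timeout")
    try:
        json.loads(body)
    except json.JSONDecodeError as exc:
        problems.append(f"malformed JSON: {exc.msg}")
    return problems


def replay(url: str, payload: dict[str, Any], timeout_s: float = DEFAULT_TIMEOUT_S) -> list[str]:
    """POST a saved payload to your endpoint and diagnose what comes back."""
    data = json.dumps(payload).encode()
    req = urllib.request.Request(url, data=data, headers={"Content-Type": "application/json"})
    start = time.monotonic()
    try:
        with urllib.request.urlopen(req, timeout=timeout_s) as resp:
            status, body = resp.status, resp.read().decode()
    except urllib.error.HTTPError as err:  # non-2xx responses still carry a body
        status, body = err.code, err.read().decode()
    except OSError as err:  # connection errors and socket timeouts
        return [f"request failed or timed out: {err}"]
    return diagnose_tool_response(status, body, time.monotonic() - start, timeout_s)
```

Splitting the pure check from the network call means the same `diagnose_tool_response` can also be run on a status code and body copied out of your server logs.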

Why is my Vapi agent silent sometimes?

Silence after a user speaks is almost always an LLM response latency issue, not an audio failure. Vapi uses endpointing to detect when the user has finished speaking — once endpointing fires, Vapi sends the conversation to the LLM and waits for a response. If the LLM takes longer than expected (typically over 1.5 seconds for streaming responses), the caller hears silence. Check your Vapi call object's latency breakdown by stage. If llmLatency is the outlier, the fix is a faster model (GPT-4o mini, Claude Haiku) or a shorter system prompt that reduces first-token latency.

Ready to investigate your own calls?

Connect Sherlock to your voice providers in under 2 minutes. Free to start — 100 credits, no credit card.